Most AI support for nonprofits starts with the technology. We start with leadership, because that's where the real work happens. How you protect trust, set boundaries, guide your team, and build governance that holds is what determines whether AI works for your organization or against it.

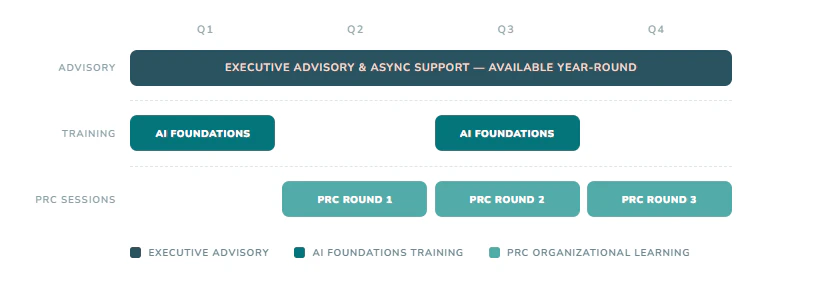

The Responsible AI Practice gives you an annual structure for doing that work, with a partner who understands the nonprofit context from the inside.

Nonprofit executives know AI holds real potential. They can see how it might advance their mission and give staff more time to focus on what matters most. But most don't have the time, capacity, or internal expertise to steward this moment well, let alone keep pace with a technology that keeps evolving.

They also know the risks are real. Donor data. Intellectual property. Equity. Bias. Staff burden. Reputational harm. The fear of falling behind, or of moving too fast without the right guardrails in place.